This Cat Does Not Exist: Ethics of Artificial Intelligence

February 11, 2020 12 min. read

The developers of the popular ThisPersonDoesNotExist website have trained a new neural network to generate photos of cats that do not exist. However, instead of realistic depictions, the system produces eerie and unsettling creatures.

Is it ethical toward cats? Probably not. We do not know why they have stayed silent about it.

The main feature of AI-based systems is considered to be their ability to make decisions autonomously, based on available data. One example is a car autopilot, which decides how, at what speed, and by which route to drive. The same applies to programs that analyze our behavior on the Internet: they measure activity on social networks, track search queries, infer location from comments under photos, and so on.

As data is processed, programs offer users optimal solutions for a particular task. Although these suggestions are only advisory, people follow them readily because they appear so rational. As a result, machines end up making decisions that directly affect our lives. As the technology develops, we can expect more and more areas of application for such “smart” machines. Moreover, today the most valuable and exciting results in many scientific fields (from genomic research to the Internet of Things) are obtained exactly where big data analysis takes place. This kind of analytics is clearly impossible without the help of machines: a person cannot process such a volume of information, while a machine is fully capable of doing so.

Safety and ethics

For several years, technological and scientific circles have been engaged in a heated debate about the safety and ethics of using artificial intelligence systems. The question is also raised at the state level: the USA, Europe, and Russia are discussing the legal changes that need to be introduced before AI becomes firmly embedded in everyday life. Unmanned vehicles are already on the road!

All this means that responsibility must somehow be assigned for cases where a tragedy occurs because systems and algorithms fail. The simplest example: who is responsible for an accident caused by an AI driver?

Legislative initiatives

The European Commission is developing ethical principles for AI, and the UK House of Lords has published the report “AI in the UK: ready, willing and able?”.

To summarize the proposed ethical principles: AI should be controlled and useful to society, safe for humans, reliable in operation, and predictable in its actions.

All AI decisions and errors should be transparent, understandable, and accessible for analysis. The privacy and personal data of citizens must be protected. Work on creating AI should be conducted in a friendly, open international environment, without an arms race, in compliance with safety standards, and with an analysis of all possible risks.

The goals of self-learning and self-reproducing AI systems should be tightly controlled and consistent with universal values, as well as increase the welfare (economic, social, and environmental) of humanity as a whole, and not of one state or organization.

How can all these reasonable ethical principles, in particular tight control over self-learning AI systems, be combined without slowing down AI development?

There are no answers yet.

However, we can say with certainty that development in the field of AI should be overseen by society. Then everyone can decide for themselves whether to use a specific technology and whether to buy it.

The reality at the moment is different. For example, according to The Independent, Amazon acknowledged that company employees listened to customer recordings to improve and retrain the Alexa voice assistant. Amazon Alexa’s privacy settings do not let you opt out of having your voice recorded.

Fakes, so deep

DeepFake and Zao technologies can insert your face from photos into any video. Swapping Arnold Schwarzenegger with Sylvester Stallone in the Terminator movie may seem harmless, but these technologies go much further, into a realm where “this person does not exist” becomes a disconcerting reality of artificial visual manipulation.

In fake videos, famous people say things they would never say and do things they would never do in public. The technology proved troubling enough that the U.S. House of Representatives held a hearing on the risks associated with artificial intelligence and DeepFake, and Virginia expanded its law against revenge porn to cover the use of DeepFake.

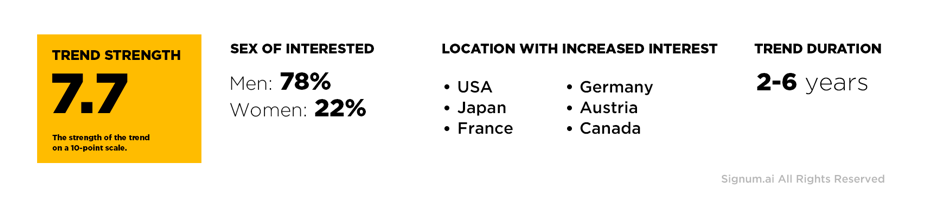

Recently, the Signum.ai analytics engine identified technology for fighting deepfakes as a strong uptrend.

Over the next two years at least, we can expect widespread development of special technologies for recognizing fake information. What do you think will make this possible? Artificial intelligence, of course.

Other aspects of unethical use

The problem of hacking, selling, or unauthorized and unethical use of databases is not new. Back in 2010, Netflix canceled a planned follow-up to its $1 million contest for developing recommendation algorithms after legal and privacy concerns were raised about handing customer data to third-party developers. Today, using AI algorithms for Big Data analysis and large neural networks, it is possible to derive new sensitive personal information from seemingly anonymous data. Is there a violation of the law? No. Are ethical principles violated? Yes.
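To make the risk of recovering personal information from seemingly anonymous data more concrete, here is a minimal sketch of a so-called linkage attack. It is deliberately much simpler than the AI methods mentioned above: it only joins two tables on shared attributes. All records, field names, and the `link_records` helper are hypothetical and invented for illustration; they do not describe any real dataset or company.

```python
from collections import defaultdict

# Hypothetical "anonymized" viewing data: names removed, but some
# attributes (quasi-identifiers) are still present.
anonymized_ratings = [
    {"zip": "10001", "birth_year": 1985, "gender": "F", "watched": "Documentary A"},
    {"zip": "10001", "birth_year": 1990, "gender": "M", "watched": "Drama B"},
    {"zip": "94105", "birth_year": 1985, "gender": "F", "watched": "Comedy C"},
]

# Hypothetical public records that contain names and the same attributes.
public_profiles = [
    {"name": "Alice", "zip": "10001", "birth_year": 1985, "gender": "F"},
    {"name": "Bob", "zip": "10001", "birth_year": 1990, "gender": "M"},
    {"name": "Carol", "zip": "60601", "birth_year": 1985, "gender": "F"},
]

QUASI_IDENTIFIERS = ("zip", "birth_year", "gender")


def key(record):
    """Build a lookup key from the quasi-identifier fields of a record."""
    return tuple(record[field] for field in QUASI_IDENTIFIERS)


def link_records(anonymized, public):
    """Return {name: watched} for anonymized rows that match exactly one person."""
    names_by_key = defaultdict(list)
    for profile in public:
        names_by_key[key(profile)].append(profile["name"])

    matches = {}
    for row in anonymized:
        names = names_by_key.get(key(row), [])
        if len(names) == 1:  # a unique match means the row is re-identified
            matches[names[0]] = row["watched"]
    return matches


reidentified = link_records(anonymized_ratings, public_profiles)
print(reidentified)
```

In this toy data, two of the three "anonymous" rows can be tied back to a named person, even though the viewing table itself contains no names. Real attacks work on far noisier data, but the principle is the same.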

A couple more examples of the not entirely ethical use of AI algorithms:

Targeted advertising using recommender systems with AI has become so personalized that it borders on a threat to personal safety. Through a mobile phone with Wi-Fi enabled, it is possible to accurately identify a person and determine where they are. Using data from various sensors (such as an accelerometer and a gyroscope) inside your phone, simple neural networks can determine what you are doing at any given time.

Cable television not only brings a huge variety of channels to your home but also captures (unlike conventional terrestrial television) what you watch. Further, machine learning algorithms add general demographic information (the number of adults and children in your family, your age, etc.), analyze the information, and give recommendations on targeted advertising, which will be different for you and your neighbor.

Does nothing seem wrong? However, society does not know how this data is used afterwards. Will it be sold to someone? Facebook would not have had its scandal with Cambridge Analytica if it had not tried to sell more advertising and increase its profits.

Unlike commercial companies, universities pay great attention to ethical issues in research. Before starting any research at a university, you need to submit a stack of paperwork to the supervisory board showing that your research (including the development of machine learning methods and AI) does not contradict the principles of ethics.

The smartest solution

Businesses' compliance with AI ethical standards needs to be monitored. Introducing such norms into society will be a long road, possibly a generation long. At the end of this path, unauthorized use and analysis of data, including with AI, should be treated roughly like the theft of wallets or handbags in the subway.

Unfortunately, the media today pay excessive attention to AI technologies. Society has inflated expectations, sometimes bordering on a belief in magic. This is where the problem of the unethical use of AI by unethical human experts comes from.

Today, ethical issues in the use of artificial intelligence technology primarily depend on the ethical behavior of developers and users of AI.
