We Value Your Anonymity: Differential Privacy Explained by Signum.AI

April 5, 2024 5 min. read

Nowadays, brands use more data than ever to build successful marketing campaigns. And for good reason — it’s way more logical to base your ads on accurate statistical data and provide only relevant offers than to take a shot in the dark by relying on your intuition.

Users’ private info is a tricky topic, though. If it leaks, it can cause a lot of damage. Scammers are constantly on the lookout for unprotected data they can steal and abuse for their own purposes. For example, some want to identify the home addresses of wealthy people in order to rob them, while others run racist harassment campaigns or target undocumented immigrants. Whatever the case, it’s risky to have your data publicly accessible, so when users have a chance to hide their personal information, most of them do, and we can’t judge them for it.

This also explains Apple’s recent updates regarding app and mail confidentiality: a logical response to popular customer demand (even though it looks like a spoke in marketers’ wheels). Effective ad campaigns, however, depend on this kind of data. As a result, we end up with companies looking for ways to gather users’ private data and clients wanting to keep it hidden. One of the most promising ways to satisfy both of these conflicting needs is differential privacy (DP).

With differential privacy, brands can still collect useful data about customers without compromising their preference for confidentiality.

So What the Heck is Differential Privacy?

Differential privacy is one of the most reliable ways to protect the people behind a dataset. The method uses carefully calibrated noise, that is, small random errors, so that published results reveal almost nothing about any single user. The algorithms intentionally inject these “slip-ups” into the data or into query results to throw off anyone trying to trace an individual’s identity. This way, it becomes extremely difficult to connect the dots of one user’s information, while the overall statistics stay useful.
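To make the idea of injected noise more concrete, here is a minimal Python sketch of one common approach, the Laplace mechanism. The article itself doesn’t name a specific mechanism, so treat this as an illustrative assumption: the function names, the toy data and the `epsilon` value are all hypothetical.

```python
import random


def laplace_noise(scale, rng):
    """Draw one sample from a Laplace(0, scale) distribution.

    The difference of two independent exponential variables with
    mean `scale` follows a Laplace distribution with that scale.
    """
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def noisy_count(records, predicate, epsilon, rng):
    """Return a noisy count of the records that satisfy `predicate`.

    Adding or removing one person changes a count by at most 1
    (sensitivity 1), so the noise scale is 1 / epsilon.
    """
    true_count = sum(1 for record in records if predicate(record))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical toy data: ages of ten survey participants.
ages = [23, 35, 41, 29, 52, 38, 61, 27, 44, 33]
rng = random.Random(0)

# The exact answer is 4; the published answer is 4 plus random noise.
print(noisy_count(ages, lambda age: age >= 40, epsilon=0.5, rng=rng))
```

A smaller `epsilon` means more noise and stronger privacy, while a larger one gives more accurate but less private answers.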

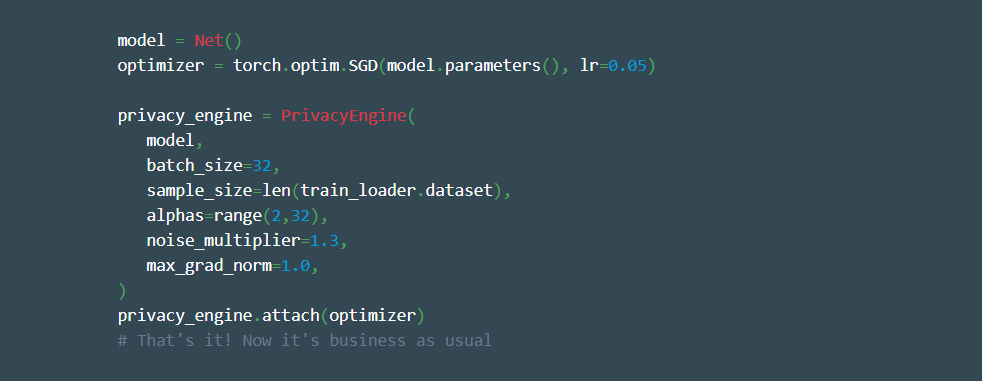

Here’s how it looks in code:

Credits to Facebook AI

How Does it Work?

To better understand how differential privacy works, let’s take a look at an example. Say you want to find out how many people do something illegal or embarrassing, like gossiping. The best way to do that is to set up a survey, asking “Do you gossip about your best friend?” with “yes” and “no” buttons below it. Most people aren’t exactly willing to share this kind of info and won’t tell the truth — unless nobody can trace their identity. To protect it, smart privacy algorithms introduce the above-mentioned noise before sending the answer to a server.

Let’s say Jane is quite a gossip girl, and she clicks on the “yes” button. The system virtually flips a coin, and from there, there are two options:

— if it’s heads, the algorithm sends Jane’s real answer to the server;

— if it’s tails, it flips another coin. Then, it sends “yes” if it’s tails and “no” if heads.

This way, there’s a 25% chance that the answer sent to the server is “no” even though Jane really is a gossip girl, so nobody can be sure what she actually clicked. Having collected responses from all participants, you know how the noise was added and can correct for it: the expected share of “yes” reports equals half the true share plus 25 percentage points, so the true share is roughly twice the reported share minus 50 percentage points. You still get a reliable group-level result (which you can use to better understand the audience) but don’t know any individual’s answer. As a result, you reach your conclusion without intruding on participants’ privacy.
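The coin-flip scheme above can be sketched in a few lines of Python. This is a simplified simulation rather than production code: the population size, the 30% share of true “yes” answers and the function names are made up for illustration. Because a “yes” is reported with probability 0.5 × (true share) + 0.25, the true share can be estimated as 2 × (reported share − 0.25).

```python
import random


def randomized_response(truth, rng):
    """Report an answer using the two-coin scheme described above."""
    if rng.random() < 0.5:
        # First coin is heads: send the real answer.
        return truth
    # First coin is tails: flip again and send "yes" (True) on tails,
    # "no" (False) on heads, regardless of the real answer.
    return rng.random() < 0.5


def estimate_true_rate(reports):
    """Estimate the share of real "yes" answers from the noisy reports."""
    observed = sum(reports) / len(reports)
    # Invert observed = 0.5 * p + 0.25 and clamp to the valid range.
    return min(1.0, max(0.0, 2.0 * (observed - 0.25)))


rng = random.Random(42)

# Hypothetical population: 30% of 100,000 respondents really do gossip.
truths = [i < 30_000 for i in range(100_000)]
reports = [randomized_response(answer, rng) for answer in truths]

print(f"reported yes rate:   {sum(reports) / len(reports):.3f}")
print(f"estimated true rate: {estimate_true_rate(reports):.3f}")
```

The estimate lands close to the real share, yet any single report could be the result of a coin flip, which is exactly what gives each participant deniability.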

You may be thinking, “Wow, pretty complicated. Why not use other methods of privacy protection?” Unfortunately, conventional methods have proven to be ineffective in keeping your info anonymous.

Why Traditional Privacy Protection Methods Don’t Work

Data anonymization and hashing used to be common ways of safeguarding users’ personal information. But a series of experiments has shown that you can’t just take the data, remove people’s names and dates of birth and call it a day.

In 2006, the popular streaming platform Netflix launched a competition in which participants were asked to predict users’ movie ratings based on their previous rating history. The published dataset had user names replaced with random IDs, yet researchers from the University of Texas showed that some of these users could be re-identified, proving that this kind of anonymization wasn’t an effective protection method. All it took was matching the ratings to public information from IMDb. A planned follow-up contest was later cancelled over privacy concerns.

Credits to: Simply Explained

Similar linkage attacks happen all the time: seemingly anonymous pieces of information are combined in unexpected ways to reveal a person’s identity. In fact, it’s been estimated that 87% of Americans can be uniquely identified with only three pieces of information: date of birth, gender and ZIP code. So much for anonymity, huh?

Thinking about how unprotected our data actually is can give you chills. With only a few clicks, it’s possible to identify someone’s medical records, which is what happened to the then-governor of Massachusetts, William Weld. The state’s Group Insurance Commission published data on employees’ hospital visits. Their names, addresses and other directly identifying info were, of course, removed. Yet that didn’t stop researcher Latanya Sweeney from revealing Weld’s identity. After combining the available data (the published medical records and public voter records), there was only one person who had the same gender, lived in the same ZIP code and had the same date of birth as the governor. And just like that, his medical records were exposed. Had differential privacy been applied to the published data, linking the records this way would have been far harder.
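A linkage attack like the one described above boils down to a simple join on the fields both datasets share. The sketch below uses entirely made-up records and names (none of them come from the real case) to show how matching on date of birth, gender and ZIP code can put a name back on an “anonymous” row.

```python
# Hypothetical "anonymized" hospital records: names removed, but
# date of birth, gender and ZIP code left in place.
medical_records = [
    {"dob": "1961-03-14", "gender": "F", "zip": "02138", "diagnosis": "asthma"},
    {"dob": "1975-11-02", "gender": "M", "zip": "02139", "diagnosis": "fracture"},
    {"dob": "1988-06-21", "gender": "F", "zip": "02139", "diagnosis": "migraine"},
]

# Hypothetical public voter roll with names attached.
voter_roll = [
    {"name": "Alice Example", "dob": "1961-03-14", "gender": "F", "zip": "02138"},
    {"name": "Bob Example", "dob": "1975-11-02", "gender": "M", "zip": "02139"},
    {"name": "Carl Example", "dob": "1975-11-02", "gender": "M", "zip": "02139"},
    {"name": "Dana Example", "dob": "1990-01-01", "gender": "F", "zip": "02139"},
]


def link_records(anonymous, public, keys=("dob", "gender", "zip")):
    """Name every anonymous row that matches exactly one public row."""
    index = {}
    for person in public:
        fingerprint = tuple(person[key] for key in keys)
        index.setdefault(fingerprint, []).append(person["name"])

    matches = []
    for row in anonymous:
        names = index.get(tuple(row[key] for key in keys), [])
        if len(names) == 1:  # a unique match re-identifies the person
            matches.append((names[0], row["diagnosis"]))
    return matches


print(link_records(medical_records, voter_roll))
```

In this toy data only the first record is re-identified: the second matches two voters and stays ambiguous, and the third matches nobody on the roll.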

Okay, now we know what differential privacy is and how it works — time to explore some real-life use cases.

Where Differential Privacy Can Be Applied

Think of any industry in the digital world. Chances are, differential privacy can be applied there. From social networks to location-related platforms — using differential privacy is an absolute must if you want to protect your users’ data.

Marketing giants have been applying DP for a while now. For instance, Facebook gathers behavioral data for targeted ad campaigns while Amazon accumulates info that helps to personalize clients’ shopping preferences. Apple is on the list, too: it introduced differential privacy back in iOS 10 and is still a huge fan, if you will.

The company collects valuable insights from its devices (including iPhones, iPads and so on). It uses differential privacy to gather data on which websites take up the most energy, which words outside Apple’s dictionary people use in specific scenarios and which images are favored in different contexts. All of this is done without exposing individual users’ private data.

Well-known brands constantly work on making DP more accessible. For example, Facebook has announced the release of an open-source DP library, Opacus.

Unlike its precursors, the library is:

• simpler (especially the interface);

• quicker (thanks to the implementation of vectorized computation instead of the previously used micro-batching);

• more flexible (users are now able to easily prototype their ideas by mixing PyTorch code with pure Python code).

The implementation of Opacus is supposed to assist engineers and researchers in adopting differential privacy in machine learning and encourage DP research in general. More about Opacus here.
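For a rough idea of what differentially private training involves, here is a plain-Python sketch of the core step of DP-SGD, the algorithm Opacus is built around: clip each sample’s gradient, add Gaussian noise to the sum, then average. This is not Opacus code and doesn’t use its API. The function names, parameter names and numbers are hypothetical and chosen only for illustration.

```python
import math
import random


def clip(grad, max_norm):
    """Scale one sample's gradient so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    factor = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * factor for g in grad]


def private_gradient(per_sample_grads, max_norm, noise_multiplier, rng):
    """Average clipped per-sample gradients after adding Gaussian noise."""
    clipped = [clip(grad, max_norm) for grad in per_sample_grads]
    dim = len(clipped[0])
    summed = [sum(grad[i] for grad in clipped) for i in range(dim)]
    # The noise is scaled to the clipping bound, so no single sample
    # can stand out from it.
    sigma = noise_multiplier * max_norm
    noisy = [value + rng.gauss(0.0, sigma) for value in summed]
    return [value / len(clipped) for value in noisy]


rng = random.Random(0)

# Hypothetical per-sample gradients for a model with two parameters.
grads = [[3.0, 4.0], [0.3, 0.4], [-1.0, 2.0]]
print(private_gradient(grads, max_norm=1.0, noise_multiplier=1.1, rng=rng))
```

Clipping caps how much any one training example can influence the update, and the noise hides whatever influence remains. Computing a separate gradient for every sample is the expensive part, which is where the vectorized computation mentioned above pays off.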

Wrapping Up

While differential privacy has been acknowledged as a standardized data protection technique by top-notch companies, it’s still quite a novelty — although it’s just a matter of time before that changes.

At the same time, using DP for your business is a real must-do as it’ll ensure your clients’ anonymity without taking away precious data about them.

So don’t hesitate to give it a shot. 🙂
